About

ex)situ is an Inria research lab located at LISN (Laboratoire Interdisciplinaire des Sciences du Numérique, Ada Lovelace Building 650), Université Paris-Saclay, with permanent faculty from Inria, CNRS and the Université Paris-Sud. ex)situ explores the limits of human-computer interaction, specifically how extreme users interact with technology in extreme situations.

Directions to the lab

The lab is in the PCRI building, on the left. You can enter through the brown building on the right, Ada Lovelace (Building 650).

To arrive by public transit, take the RER B to Le Guichet. Head to the northern exit of the train station and turn right, and then right again at the intersection. To the left there's a walking ramp that goes down to the bus station. From here you can catch a #9 bus which will take you to the stop Université Paris-Saclay.

ViaNavigo is a good site for helping you plan your trip to and from PCRI.

If you are going by car, here is the location of the building on Google Maps.

Research Themes

Overview

ex)situ is an Inria research team, joint with the Université Paris-Saclay, that focuses on improving our understanding of interaction in order to reinvent how we design and implement interactive systems.

Our focus is always on the user, especially extreme users who push the limits of existing technology and force us to reconsider our fundamental assumptions about interaction design. Emphasizing extreme users leads us to also consider extreme situations, where users face interaction challenges that cannot be addressed by standard interfaces. This leads us to extreme design, where we work with creative professionals to reinvent the design process.

Introduction

Interactive devices are everywhere: we wear them on our wrists and belts; we consult them from purses and pockets; we read them on the sofa and on the metro; we rely on them to control cars and appliances; and soon we will interact with them on living room walls and billboards in the city. Over the past 30 years, we have witnessed tremendous advances in both hardware and networking technology, which have revolutionized all aspects of our lives, not only business and industry, but also health, education and entertainment. Yet the ways in which we interact with these technologies remain mired in the 1980s. The graphical user interface (GUI), revolutionary at the time, has been pushed far past its limits. Originally designed to help secretaries perform administrative tasks in a work setting, the GUI is now applied to every kind of device, for every kind of setting. While this may make sense for novice users, it forces expert users to use frustratingly inefficient and idiosyncratic tools that are neither powerful nor incrementally learnable.

ex)situ

ex)situ explores the limits of interaction: how extreme users interact with technology in extreme situations. Rather than beginning with novice users and adding complexity, we begin with expert users who already face extreme interaction requirements. We are particularly interested in creative professionals, artists and designers who rewrite the rules as they create new works, and scientists who seek to understand complex phenomena through creative exploration of large quantities of data. Studying these advanced users today will not only help us to anticipate the routine tasks of tomorrow, but also help us to advance our understanding of interaction itself. We seek to create effective human-computer partnerships, in which expert users control their interaction with technology. Our goal is to advance our understanding of interaction as a phenomenon, with a corresponding paradigm shift in how we design, implement and use interactive systems. We have already made significant progress through our work on instrumental interaction and co-adaptive systems, and we hope to extend these into a foundation for the design of all interactive technology.

We characterize Extreme Situated Interaction as follows:

Extreme users. We study extreme users who make extreme demands on current technology.

We know that human beings take advantage of the laws of physics to find creative new uses for physical objects. However, this level of adaptability is severely limited when manipulating digital objects. Even so, we find that creative professionals (artists, designers and scientists) often adapt interactive technology in novel and unexpected ways and find creative solutions. By studying these users, we hope to not only address the specific problems they face, but also to identify the underlying principles that will help us to reinvent virtual tools. We seek to shift the paradigm of interactive software, to establish the laws of interaction that significantly empower users and allow them to control their digital environment.

Extreme situations. We develop extreme environments that push the limits of today’s technology. We take as given that future developments will solve “practical” problems such as cost, reliability and performance, and we concentrate our efforts on interaction in and with such environments. This has been a successful strategy in the past: personal computers only became prevalent after the invention of the desktop graphical user interface. Smartphones and tablets only became commercially successful after Apple cracked the problem of a usable touch-based interface for the iPhone and the iPad. Although wearable technologies, such as watches and glasses, are finally beginning to take off, we do not believe that they will create the major disruptions already caused by personal computers, smartphones and tablets. Instead, we believe that future disruptive technologies will include fully interactive paper and large interactive displays. Our extensive experience with the Digiscope WILD and WILDER platforms places us in a unique position to understand the principles of distributed interaction that extreme environments call for. We expect to integrate, at a fundamental level, the collaborative capabilities that such environments afford. Indeed, almost all of our activities in both the digital and the physical world take place within a complex web of human relationships. Current systems only support, at best, passive sharing of information, e.g., through the distribution of independent copies. Our goal is to support active collaboration, in which users are engaged in the lifecycle of digital artifacts.

Extreme design. We explore novel approaches to the design of interactive systems, with particular emphasis on extreme users in extreme environments. Our goal is to empower creative professionals, allowing them to act as both designers and developers throughout the design process. Extreme design affects every stage, from requirements definition, to early prototyping and design exploration, to implementation, to adaptation and appropriation by end users. We hope to push the limits of participatory design to actively support creativity at all stages of the design lifecycle. Extreme design does not stop with purely digital artifacts. The advent of digital fabrication tools and FabLabs has significantly lowered the cost of making physical objects interactive. Creative professionals now create hybrid interactive objects that can be tuned to the user’s needs. Integrating the design of physical objects into the software design process raises new challenges, with new methods and skills to support this form of extreme prototyping.

Our long-term, admittedly ambitious, goal is to create a unified theory of interaction grounded in how people interact with the world. Our concepts of information substrates and human-computer partnerships offer a generative approach for supporting creative activities, from early exploration to implementation. The rest of this section presents our work around four themes: Fundamentals of Interaction, Human-Computer Partnerships, Creativity, and Collaboration. Individual projects typically address multiple themes, and different members of the group work together to advance these different topics.

Collaboration

Our goal is to support collaboration among people and across systems as a fundamental element of interactive system design

Collaboration is ubiquitous yet poorly supported by current computer systems. The personal computer was designed for single users, and most current collaborative technologies, from e-mail to social networks, only support communication-based collaboration, where users exchange information and artifacts. With rare exceptions, sharing does not really mean sharing; it means sending a copy and losing control over it. We explore new ways to support collaborative interaction, especially within and across large interactive spaces, such as those of the Digiscope network.
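The contrast between sending a copy and truly sharing an artifact can be illustrated with a minimal Python sketch. The `Document` class and all names below are hypothetical, chosen only for illustration; they do not describe any ex)situ system.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class Document:
    """Hypothetical artifact, used only for this illustration."""
    title: str
    lines: list[str] = field(default_factory=list)


# Copy-based "sharing": the recipient gets an independent copy,
# so later edits on either side are invisible to the other.
original = Document("Budget", ["rent: 900"])
sent_copy = copy.deepcopy(original)
sent_copy.lines.append("food: 300")
copy_diverged = original.lines != sent_copy.lines

# Shared artifact: both users hold a reference to the same object,
# so an edit made by one is immediately visible to the other.
shared = Document("Budget", ["rent: 900"])
alice_view = shared
bob_view = shared
bob_view.lines.append("food: 300")
edit_visible = alice_view.lines == ["rent: 900", "food: 300"]
```

In the first case the two versions drift apart as soon as one side edits; in the second, both users always see the same artifact. A real collaborative system would also have to handle concurrency, access control and networking, which this sketch leaves out.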

The need for collaboration has never been so high, especially for extreme users. Some activities require combining the expertise of different users on well-defined tasks, such as analyzing scientific data, evaluating CAD models or scheduling complex events. Some activities require more flexible forms of collaborative interaction in order to improve creativity by brainstorming and combining ideas, such as product design, artistic creation or crisis management. Some activities require tight-loop collaboration, such as teaching or artistic performance, e.g. playing a concert with remote musicians. But because collaboration support is mostly an add-on to existing tools, none of the above examples can be conducted effectively today.

We believe that a paradigm shift as important as the switch from command-line interfaces to graphical user interfaces can occur if we make true sharing and collaboration a fundamental feature of the digital world. Just as graphical user interfaces demanded the creation of a new interaction style, namely direct manipulation, collaborative interfaces will demand the creation of new ways to support collaboration, in both real and deferred time, in both collocated and remote settings.

We have started to investigate how to support telepresence among large, heterogeneous interactive spaces. We also created Webstrates, an environment for exploring shareable dynamic media and the concept of information substrate. We plan to create novel collaborative systems that enable users not only to see the same artifact and to see each other, but also to act together. We will explore both the case where users interact together within the digital world, e.g. collaborative interaction on the same dataset, and the case where users interact together within the physical world through the computer, e.g. remote design or fabrication of physical objects.

We will take advantage of extreme environments to explore new ways to support collaborative interaction, especially within and across large interactive spaces such as those of the Digiscope platform. For example, by adding telepresence capabilities to large interactive spaces for remote collaboration, we expect to improve social presence and understanding of what each user is doing, therefore supporting richer interaction. We will also explore how to support rich collaboration and collaborative manipulation of tools or objects when users have different interaction capabilities, either because they are using different devices in the same interactive space (distributed interaction) or because they are interacting from different interactive spaces (remote collaboration). Finally, we will address the transitions among different collaboration modes: between real-time and deferred time, between co-located and remote collaboration, and between collaborative and individual work.

Fundamentals of Interaction

Our goal is to build upon a set of fundamental principles, including instrumental interaction, substrates and co-adaptive systems, to describe, evaluate and generate ideas for novel interactive systems

We are developing a set of design principles that provide the theoretical foundations for our research. These concepts are grounded in current understanding of human cognitive and sensori-motor capabilities and the potential of modern-day interactive computing. Together, they form a novel approach that both describes current interactive technology and also provides a generative approach for designing simpler, yet more powerful interactive systems. Our goal is to create a paradigm shift similar to that of moving from command-line interfaces to graphical user interfaces.

In order to better understand fundamental aspects of interaction, ex)situ will study interaction in extreme situations. We will conduct in-depth observational studies and controlled experiments which contribute to theories and frameworks that unify our findings and help us generate new, advanced interaction techniques. We will continue to explore the theory of Instrumental Interaction in the context of multi-surface environments and extend it into the wider framework of information substrates. We will also continue to study elementary interaction tasks in large-scale environments, such as pointing and object manipulation.

Creating interactive systems is complex, and the available tools trail far behind the capabilities of the available hardware. Creating the kinds of systems we envision is even more challenging since we must create high-performance distributed systems running in heterogeneous environments using a variety of input and output devices while collecting and analyzing data in real time. In addition, the rapid prototyping cycles required by our Extreme Design approach imply the ability to quickly create and modify such systems.

To address this challenge, we need to better understand the interplay between the design process and the tools to create designs so that we can identify the appropriate tools and software infrastructure. In particular, one critical challenge that hinders extreme users when they want to appropriate and combine existing technologies is the lack of interoperability among tools and the lack of consistency within and across interactive systems, making it often impossible or excessively difficult to, e.g., combine different interaction styles or devices, transfer an interaction technique from a desktop computer to a mobile device, use different programming toolkits or access low-level settings of a device driver at the user level.

By identifying concepts and abstractions that work at multiple levels, we seek to address these challenges and facilitate the exploration, implementation and integration of novel interaction technologies and techniques. More concretely, we will develop concepts, methods and tools at three levels: the developer level, with new architectures and programming languages that promote openness for interaction through interoperability; the designer level, with new prototyping tools for creative and extreme prototyping; and the end-user level, with new configurable and co-adaptive systems.

Human-computer partnerships

Our goal is to create new forms of human-computer partnerships, where people take advantage of computational power while remaining in control

We design interactive systems based on three fundamental relationships between people and computers:

The computer may act as a:

- tool wielded by the user

- servant that performs tasks for the user

- medium that interconnects two or more users

Human-computer interaction research traditionally focuses on the first, building interactive systems where the user controls the interaction. Artificial intelligence and machine learning traditionally focus on the second: building systems that solve problems for the user. Social media and other communication systems clearly serve the third, creating vast networks that interconnect humanity. We believe that a new paradigm can emerge by synthesizing these approaches to create new forms of human-computer partnerships.

Building upon the concept of co-adaptation, in which users not only adapt their behavior to the system but also adapt the system to their needs, we introduce the concept of reciprocal co-adaptation, which involves any of the following four human-computer relationships:

- People adapt to computers: they learn to use the system and are constrained by its prescribed modes of use

- People adapt computers: they use the system in unexpected ways, appropriating features for new, unanticipated purposes

- Computers adapt to people: they can modify the interaction over time according to past user behavior

- Computers adapt people: they can shape the user's behavior, helping users to learn (or persuading them to act)

We take advantage of the power of machine learning to not only model the user's behavior to inform the system, but also to model the system's behavior to inform the user. Integrating these two approaches creates a reciprocal form of co-adaptation in which users take advantage of feedback (to understand what the system did) and feedforward (to understand what the system can do in the current context). Reciprocal co-adaptation can create particularly effective human-computer partnerships in which users are better informed of system capabilities and remain in control as they explore new possibilities.
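As a rough illustration of feedback and feedforward, the following Python sketch shows a command menu that adapts to its user. It is a toy under stated assumptions: the `AdaptiveMenu` class and its methods are hypothetical, and a simple usage counter stands in for the machine-learning models mentioned above.

```python
from collections import Counter


class AdaptiveMenu:
    """Hypothetical command menu illustrating feedback and feedforward."""

    def __init__(self, commands_by_context: dict[str, list[str]]):
        self.commands_by_context = commands_by_context
        self.usage = Counter()  # toy model of past user behavior

    def feedforward(self, context: str) -> list[str]:
        """What the system can do in this context, most-used commands first."""
        commands = self.commands_by_context.get(context, [])
        # sorted() is stable, so unused commands keep their original order.
        return sorted(commands, key=lambda c: -self.usage[c])

    def invoke(self, context: str, command: str) -> str:
        """Run a command and return feedback describing what the system did."""
        if command not in self.commands_by_context.get(context, []):
            return f"'{command}' is not available in context '{context}'"
        self.usage[command] += 1
        return f"applied '{command}' ({self.usage[command]} use(s) so far)"


menu = AdaptiveMenu({"text": ["copy", "paste", "bold"]})
before = menu.feedforward("text")        # initial order
feedback = menu.invoke("text", "bold")   # the user acts, the system reports
after = menu.feedforward("text")         # the system has adapted to the user
```

Here the system adapts to the person by reordering commands according to past use, while the person stays informed through feedforward (the list of available commands) and feedback (the message returned by `invoke`). The other two relationships, people adapting to and adapting the system, are not modeled in this sketch.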

Creativity

Our goal is to support creative professionals, particularly artists, designers, and scientists, who push the limits of interactive technology

ex)situ is interested in understanding the work practices of creative professionals, particularly artists, designers, and scientists, who push the limits of interactive technology. Our studies of these extreme users allow us to obtain empirical grounding for the theoretical concepts of instrumental interaction, information substrates and reciprocal co-adaptation. We expect to transfer what we learn to the design of creative tools, first for expert users, then for non-specialists and non-professional users.

Research in Human-Computer Interaction (HCI) has moved beyond simply making technology more efficient and its users more productive: it now seeks to make people more creative by offering new expressive forms of interaction. We are interested in supporting the creative process of professional artists such as musicians, illustrators and car designers, but also of other professions such as scientists and doctors who must be creative in their everyday work. By addressing this diverse set of ‘lead users’, we also expect to transfer what we learn to the design of creative tools for non-specialists and non-professional users.

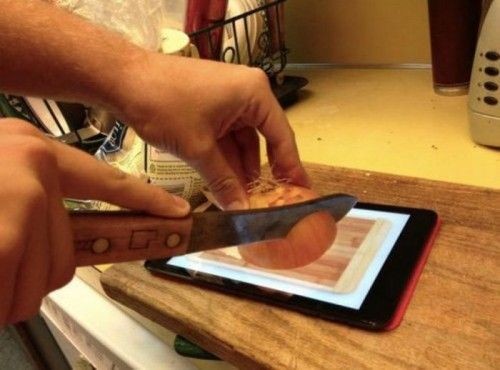

As our past studies have shown, professional artists still use traditional physical media in parallel with computers because they support forms of expression that digital tools cannot accurately reproduce, offering unique affordances, vocabularies and artistic styles. Unfortunately, physical and digital interfaces are difficult to integrate into a smooth, common workflow; each has distinct roles and serves different tasks. We hope to close this gap by creating novel forms of interaction that combine physical and electronic tools.

Our earlier work explored how interactive paper technology can help professional composers transform their personal music representations as expressed on physical paper into music-programming software. More recently, we have been exploring how illustrators switch between computer and physical tools as they transition from early sketches to final illustrations. We have also observed how designers of concept cars communicate their designs to 3D clay modelers through sketches and physical models. We have also studied how doctors make complex diagnostic decisions through the use of paper-based and interactive emergency manuals in the operating room. Finally, we have started to study how movement experience in artistic disciplines such as dance can contribute to the design of novel movement-based interactions in HCI. We collaborate with professional choreographers to explore movement qualities and integrate them as a new interaction modality. The goal is to design interactive systems that support user agency, system guidance and novelty in the choreographic process.

While we plan to continue to enhance observed practices, we also plan to apply our findings to the fabrication of everyday objects. To this end, we will explore new interactive technologies that mix physical and virtual models during different phases of the fabrication process. We will also design tools and instruments that enable users to effectively interact with and switch among their different forms and representations. By providing similar tools and a similar environment to both designers and end-users, we will bridge the gap between design and use, expanding the scope of design to the full lifecycle of interactive artifacts, to better support creativity and reciprocal co-adaptation.